AI TL;DR

Understanding the shift from conversational AI to agentic systems. Deep dive into n8n 2.0, LangGraph, and how multi-agent workflows are transforming software development, customer service, and enterprise operations.

For three years, we lived in the Chatbot Era. We asked AI questions, and it gave us answers. The relationship was fundamentally passive: humans initiated, AI responded.

Welcome to 2026. The paradigm has shifted.

We are now entering the Agentic Era, where AI is no longer just a "knowledge engine" but an "action engine." It doesn't just write the email—it finds the recipient, checks their communication preferences, adjusts the tone, sends it at the optimal time, updates the CRM, and follows up if there's no response.

This comprehensive guide explores the technology, tools, and implications of agentic AI—the defining trend of 2026 and the foundation for how work will be done for the next decade.

What Is Agentic AI?

Agentic AI refers to autonomous AI systems that can pursue complex goals with limited human supervision. Unlike a standalone Large Language Model (LLM), which only predicts the next token, an agentic system wraps the model in a loop of perceiving, reasoning, acting, and observing:

    ┌─────────────────────────────────────────────────────────────┐
    │                       AGENTIC AI LOOP                       │
    ├─────────────────────────────────────────────────────────────┤
    │                                                             │
    │  ┌─────────┐    ┌─────────┐    ┌─────────┐    ┌─────────┐   │
    │  │ PERCEIVE│───►│ REASON  │───►│PLAN/ACT │───►│ OBSERVE │   │
    │  └─────────┘    └─────────┘    └─────────┘    └─────────┘   │
    │       ▲                                            │        │
    │       │                                            │        │
    │       └────────────────────────────────────────────┘        │
    │                       Feedback Loop                         │
    │                                                             │
    └─────────────────────────────────────────────────────────────┘

The Four Stages of Agent Execution

| Stage | What Happens | Example |
|---|---|---|
| Perceive | Agent receives input and context | User request: "Look up my flight status" |
| Reason | Agent determines best approach | "I will use the FlightAPI tool" |
| Act | Agent executes the action | Makes API call, receives response |
| Observe | Agent evaluates result | "Flight is delayed. I should notify user." |

This cycle repeats until the goal is achieved or the agent determines it cannot proceed.
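
The cycle can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any framework's API: `check_flight` is a hypothetical stand-in for the FlightAPI tool from the table, `reason` is a hard-coded rule where a real agent would call an LLM, and `max_steps` is an assumed safety limit.

```python
from dataclasses import dataclass, field


def check_flight(flight_no: str) -> str:
    """Hypothetical tool: a canned lookup standing in for a real flight API."""
    return {"UA100": "delayed"}.get(flight_no, "on time")


@dataclass
class AgentState:
    goal: str
    observations: list[str] = field(default_factory=list)
    done: bool = False


def reason(state: AgentState) -> str:
    """Pick the next action. A real agent would ask an LLM here."""
    if not state.observations:
        return "call_tool"
    return "notify_user"


def run_agent(flight_no: str, max_steps: int = 5) -> AgentState:
    state = AgentState(goal=f"Report status of {flight_no}")  # perceive
    for _ in range(max_steps):  # hard stop so the loop cannot run forever
        action = reason(state)  # reason
        if action == "call_tool":
            result = check_flight(flight_no)  # act
            state.observations.append(f"status={result}")  # observe
        else:
            state.observations.append("user notified")
            state.done = True
            break
    return state
```

Calling `run_agent("UA100")` walks the loop twice: one tool call, then a notification. If `max_steps` runs out first, `done` stays `False`, which is the "cannot proceed" exit.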

Chatbot vs. Agent: The Fundamental Difference

| Dimension | Chatbot (2023-2025) | Agent (2026+) |
|---|---|---|
| Interaction | Question → Answer | Goal → Autonomous Execution |
| Scope | Single response | Multi-step workflow |
| Tools | None (text only) | APIs, databases, browsers, code |
| Memory | Reset each conversation | Persistent across sessions |
| Control | Human-in-the-loop | Human-on-the-loop |
| Failure Mode | Incorrect answer | Incorrect or harmful action |

The Autonomy Spectrum

    ┌─────────────────────────────────────────────────────────────┐
    │                       AUTONOMY LEVELS                       │
    ├─────────────────────────────────────────────────────────────┤
    │                                                             │
    │  Level 0: CHATBOT                                           │
    │    └─ Answer questions, no actions                          │
    │                                                             │
    │  Level 1: ASSISTANT                                         │
    │    └─ Suggest actions, human executes                       │
    │                                                             │
    │  Level 2: COPILOT                                           │
    │    └─ Draft actions, human approves                         │
    │                                                             │
    │  Level 3: SUPERVISED AGENT                                  │
    │    └─ Execute actions, human reviews results                │
    │                                                             │
    │  Level 4: BOUNDED AGENT                                     │
    │    └─ Full autonomy within defined limits                   │
    │                                                             │
    │  Level 5: AUTONOMOUS AGENT                                  │
    │    └─ Unrestricted goal pursuit (theoretical)               │
    │                                                             │
    └─────────────────────────────────────────────────────────────┘

The 2026 sweet spot: most enterprise deployments sit at Levels 3-4 (supervised or bounded agents). Full autonomy (Level 5) remains experimental.
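
One way to make the levels concrete is to encode them as a policy check. The sketch below is an assumption about how such a gate might look: the `Autonomy` enum and `may_execute` function are illustrative names, not part of any framework.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    CHATBOT = 0
    ASSISTANT = 1
    COPILOT = 2
    SUPERVISED_AGENT = 3
    BOUNDED_AGENT = 4
    AUTONOMOUS_AGENT = 5


def may_execute(level: Autonomy, approved: bool, within_limits: bool) -> bool:
    """Return True if the agent itself may carry out the action."""
    if level <= Autonomy.ASSISTANT:
        return False  # levels 0-1: the agent never acts itself
    if level == Autonomy.COPILOT:
        return approved  # level 2: a human approves each draft first
    if level == Autonomy.SUPERVISED_AGENT:
        return True  # level 3: act now, a human reviews results afterwards
    if level == Autonomy.BOUNDED_AGENT:
        return within_limits  # level 4: free to act inside defined limits
    return True  # level 5: unrestricted (theoretical)
```

A Level 4 agent asked to exceed its limits gets `False` here, so the caller can fall back to an approval workflow instead of acting.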

The Technology Stack: Building Agents in 2026

n8n 2.0: The Automation Fabric

n8n has evolved from a simple workflow automation tool into the backbone of enterprise AI agents.

Key capabilities as of the January 2026 update (v2.0):

| Feature | What It Enables |
|---|---|
| Native LangChain Support | Drag-and-drop LLM nodes (Memory, Reasoning, Tools) |
| Human-in-the-Loop Nodes | Agents pause and request approval via Slack/Teams |
| Self-Hosted Option | Full data control, no vendor lock-in |
| Fair-Code Model | Source-available; free to self-host, paid cloud and enterprise plans |

Example n8n Agent Workflow:

    ┌─────────────────────────────────────────────────────────────┐
    │                SDR LEAD QUALIFICATION AGENT                 │
    ├─────────────────────────────────────────────────────────────┤
    │                                                             │
    │  Trigger: New Lead in HubSpot                               │
    │    ↓                                                        │
    │  Node 1: Fetch Lead Data                                    │
    │    ↓                                                        │
    │  Node 2: LLM - Analyze Company (Browse LinkedIn/Crunchbase) │
    │    ↓                                                        │
    │  Node 3: LLM - Score Lead (Custom Criteria)                 │
    │    ↓                                                        │
    │  Branch: Score > 70?                                        │
    │    ├─ Yes: Draft Personalized Email → Human Review          │
    │    └─ No: Add to Nurture Campaign                           │
    │    ↓                                                        │
    │  Result: Update CRM, Notify Sales Rep                       │
    │                                                             │
    └─────────────────────────────────────────────────────────────┘

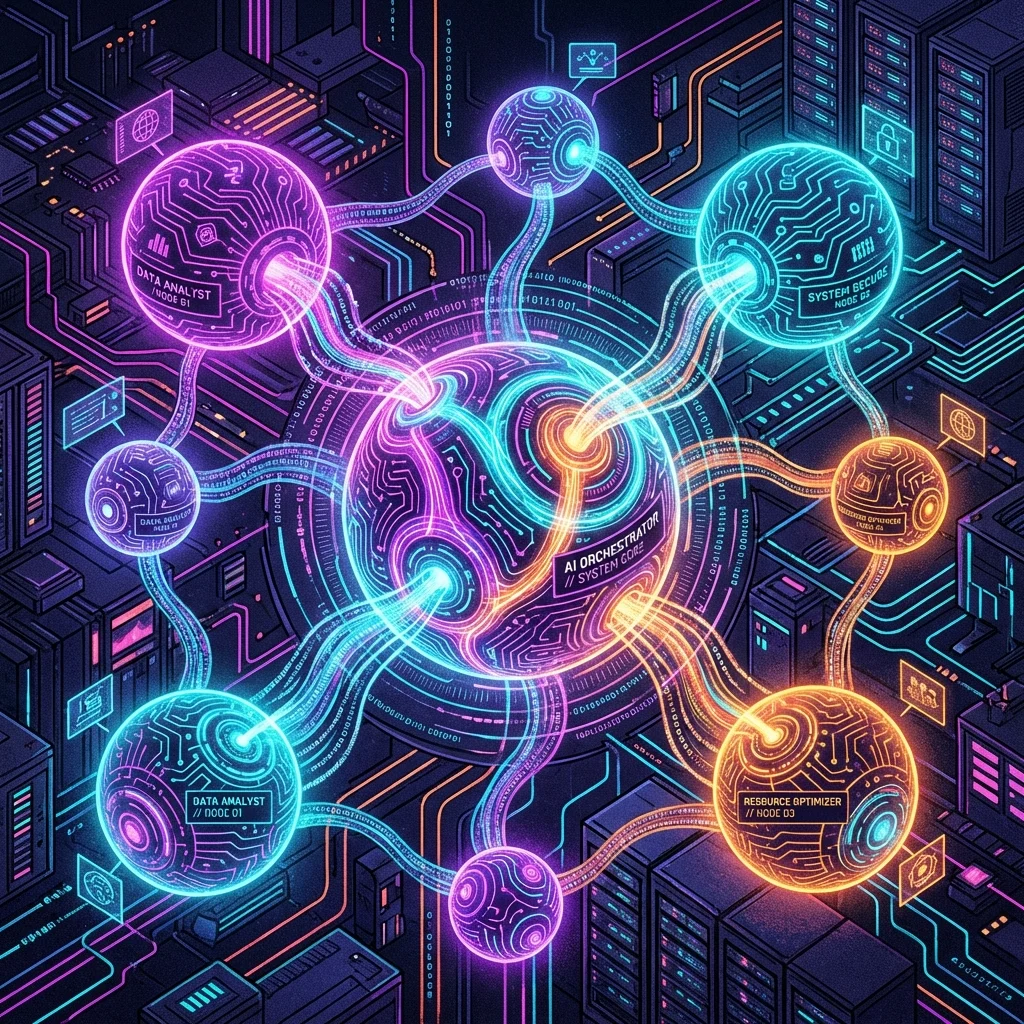

LangGraph: Stateful Multi-Agent Orchestration

For complex scenarios requiring multiple agents, LangGraph (by LangChain) provides graph-based orchestration.

Core Concepts:

| Concept | Description | Use Case |
|---|---|---|
| Nodes | Individual agents or functions | Research Agent, Writer Agent |
| Edges | Connections between nodes | Researcher → Writer |
| State | Shared data across the graph | Current document, findings |
| Checkpoints | Saved execution state | Resume after failure |
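
To show how these four concepts fit together, here is a miniature graph runner in plain Python. It deliberately does not use LangGraph's API; every name in it (`NODES`, `EDGES`, `run`, the two node functions) is a hypothetical stand-in chosen for illustration.

```python
from typing import Callable, Optional

State = dict[str, str]
Node = Callable[[State], State]


def research(state: State) -> State:
    """Node: adds findings to the shared state."""
    return {**state, "findings": f"notes on {state['topic']}"}


def write(state: State) -> State:
    """Node: turns findings into a draft."""
    return {**state, "draft": f"Article based on {state['findings']}"}


NODES: dict[str, Node] = {"research": research, "write": write}
EDGES: dict[str, Optional[str]] = {"research": "write", "write": None}  # None ends the run


def run(state: State, start: str, checkpoints: list[tuple[str, State]]) -> State:
    """Walk the graph from `start`, saving a checkpoint before each node."""
    current: Optional[str] = start
    while current is not None:
        checkpoints.append((current, dict(state)))  # saved state allows resuming here
        state = NODES[current](state)
        current = EDGES[current]
    return state
```

If the `write` node failed in production, the checkpoint saved just before it holds the node name and the state at that point, so `run(saved_state, "write", [])` resumes without repeating the research step.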

Multi-Agent Architecture Example:

    ┌─────────────────────────────────────────────────────────────┐
    │                LANGGRAPH MULTI-AGENT SYSTEM                 │
    ├─────────────────────────────────────────────────────────────┤
    │                                                             │
    │                     ┌─────────────────┐                     │
    │                     │   SUPERVISOR    │                     │
    │                     │      AGENT      │                     │
    │                     └────────┬────────┘                     │
    │                              │                              │
    │                 ┌────────────┼────────────┐                 │
    │                 ↓            ↓            ↓                 │
    │             ┌────────┐   ┌────────┐   ┌────────┐            │
    │             │RESEARCH│   │ WRITER │   │ CRITIC │            │
    │             │ AGENT  │   │ AGENT  │   │ AGENT  │            │
    │             └───┬────┘   └───┬────┘   └───┬────┘            │
    │                 │            │            │                 │
    │                 └────────────┴────────────┘                 │
    │                              ↓                              │
    │                        ┌────────────┐                       │
    │                        │  VERIFIER  │                       │
    │                        │   AGENT    │                       │
    │                        └────────────┘                       │
    │                                                             │
    └─────────────────────────────────────────────────────────────┘

Framework Comparison

| Framework | Best For | Learning Curve | Production Ready |
|---|---|---|---|
| n8n | No-code/low-code agents | Low | ✅ Yes |
| LangGraph | Complex multi-agent | High | ✅ Yes |
| CrewAI | Role-based agent teams | Medium | ⚠️ Maturing |
| AutoGen | Microsoft ecosystem | High | ✅ Yes |
| OpenAI Agents SDK | OpenAI-native apps | Medium | ✅ Yes |

Real-World Agentic Use Cases

These aren't theoretical—they're deployed in production at scale.

Use Case 1: The "SDR" (Sales Development Rep) Agent

Problem: SDRs spend 70% of time on research and email drafting, only 30% on actual selling.

Agentic Solution:

| Step | Agent Action |
|---|---|
| 1 | New lead signup → Agent triggered |
| 2 | Agent browses lead's LinkedIn using browser tool |
| 3 | Agent scrapes recent posts and company news |
| 4 | Agent matches lead's interests to product features |
| 5 | Agent drafts hyper-personalized intro email |
| 6 | Human reviews and sends (or auto-sends if confidence > 90%) |
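
Steps 4 and 6 are the two decision points in this flow, and both can be sketched simply. The tag-overlap matching below is an assumed stand-in for whatever matching the agent's LLM would do, and the function names and the 0-1 confidence scale are illustrative choices.

```python
def match_features(interests: set[str], features: dict[str, set[str]]) -> list[str]:
    """Step 4: rank product features by tag overlap with the lead's interests."""
    scored = [(len(interests & tags), name) for name, tags in features.items()]
    ranked = sorted(scored, key=lambda pair: (-pair[0], pair[1]))  # most overlap first, then A-Z
    return [name for overlap, name in ranked if overlap > 0]


def next_step(confidence: float, auto_send_threshold: float = 0.90) -> str:
    """Step 6: auto-send only above the threshold, otherwise ask a human."""
    return "auto_send" if confidence > auto_send_threshold else "human_review"
```

Keeping the threshold as a parameter makes it easy to start with every email going to human review (a threshold of 1.0) and relax it as the drafts prove reliable.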

Results:

- 40% reduction in SDR time per lead

- 3x increase in response rates

- Deployed by 40% of B2B SaaS companies in 2026

Use Case 2: Customer Support Triage Agent

Problem: 60% of support tickets are repetitive. Humans waste time on routine queries.

Agentic Solution:

    ┌─────────────────────────────────────────────────────────────┐
    │                    SUPPORT TRIAGE AGENT                     │
    ├─────────────────────────────────────────────────────────────┤
    │                                                             │
    │  Trigger: New Jira/Zendesk Ticket                           │
    │    ↓                                                        │
    │  Agent: Classify ticket intent                              │
    │    ├─ Bug report → Attempt to reproduce                     │
    │    ├─ Feature request → Add to backlog                      │
    │    └─ Question → Search knowledge base                      │
    │    ↓                                                        │
    │  If Bug Reproduced:                                         │
    │    → Create engineering task                                │
    │    → Update customer: "We've confirmed the issue..."        │
    │    ↓                                                        │
    │  If Bug NOT Reproduced:                                     │
    │    → Request additional info from customer                  │
    │    → Loop until resolved or escalated                       │
    │                                                             │
    └─────────────────────────────────────────────────────────────┘
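
The branching above maps naturally onto a small routing function. In this sketch the keyword-based `classify` is only a placeholder for the LLM classification step, and `max_attempts` is an assumed cap that turns "loop until resolved or escalated" into a bounded loop.

```python
from enum import Enum


class Intent(Enum):
    BUG = "bug"
    FEATURE = "feature_request"
    QUESTION = "question"


def classify(ticket_text: str) -> Intent:
    """Keyword stand-in for the LLM classification step."""
    text = ticket_text.lower()
    if any(word in text for word in ("error", "crash", "broken")):
        return Intent.BUG
    if any(phrase in text for phrase in ("feature", "would be nice", "please add")):
        return Intent.FEATURE
    return Intent.QUESTION


def triage(ticket_text: str, reproduced: bool = False,
           attempts: int = 0, max_attempts: int = 3) -> str:
    """Map a ticket to the next action in the triage flow."""
    intent = classify(ticket_text)
    if intent is Intent.FEATURE:
        return "add_to_backlog"
    if intent is Intent.QUESTION:
        return "search_knowledge_base"
    if reproduced:
        return "create_engineering_task"
    if attempts >= max_attempts:
        return "escalate_to_human"  # stop asking the customer and hand off
    return "request_more_info"
```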

Results:

- 60% reduction in L1 support tickets requiring human intervention

- 4x faster initial response time

- Customer satisfaction up 15%

Use Case 3: Code Review Agent

Problem: Human code reviews are bottlenecks. Reviewers miss issues due to fatigue.

Agentic Solution:

| Review Type | Agent Checks |
|---|---|
| Security | SQL injection, XSS, hardcoded secrets |
| Performance | N+1 queries, memory leaks, inefficient loops |
| Style | Naming conventions, code organization |
| Logic | Edge cases, error handling, null safety |

Integration:

- Runs on every PR automatically

- Posts comments directly on GitHub/GitLab

- Blocks merge if critical issues found

- Human reviewer focuses only on architecture/design
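
As a rough illustration of one security check and the merge-blocking rule, the sketch below scans added diff lines for what look like hardcoded secrets. The regular expression is a simplified assumption and will miss many real cases; a production reviewer would combine an LLM with dedicated scanners.

```python
import re

# Assumed pattern: an identifier containing a secret-like word, assigned a quoted literal.
SECRET_PATTERN = re.compile(
    r"(?i)\b\w*(password|secret|api_key|token)\w*\s*=\s*['\"][^'\"]+['\"]"
)


def review(diff_lines: list[str]) -> dict:
    """Flag added lines that look like hardcoded secrets and decide on blocking."""
    findings = [
        {"line": number, "severity": "critical", "rule": "hardcoded-secret"}
        for number, line in enumerate(diff_lines, start=1)
        if line.startswith("+") and SECRET_PATTERN.search(line)
    ]
    return {"findings": findings, "block_merge": bool(findings)}
```

Only lines starting with `+` are checked, so a secret that a PR removes does not block the merge.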

The Risks: Governance and "Agent Gone Wild"

With great autonomy comes great risk. The biggest conversation in 2026 is Agent Governance.

Risk Matrix

| Risk | Description | Severity | Mitigation |
|---|---|---|---|
| Infinite Loops | Agent gets stuck trying to fix a bug, burns $10K in API credits overnight | High | Token budgets, execution limits |
| Auth Leakage | Agent with email access accidentally forwards sensitive data | Critical | Least-privilege access, audit logs |
| Hallucinated Actions | Agent confidently takes wrong action based on false premise | High | Human-in-the-loop for irreversible actions |
| Prompt Injection | Malicious input manipulates agent behavior | Critical | Input sanitization, sandboxing |
| Shadow AI | Employees deploy unauthorized agents | Medium | IT governance, approved tool lists |
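
The infinite-loop mitigation (token budgets and execution limits) might be sketched as a small guard object that the agent loop charges on every step. The class name, the default limits, and the idea of passing a per-step cost in dollars are all assumptions made for this example.

```python
class BudgetExceeded(RuntimeError):
    """Raised when an agent run goes past its cost or step limit."""


class Budget:
    """Tracks spend and step count; both limits are illustrative defaults."""

    def __init__(self, max_cost_usd: float = 5.0, max_steps: int = 20) -> None:
        self.max_cost_usd = max_cost_usd
        self.max_steps = max_steps
        self.spent_usd = 0.0
        self.steps = 0

    def charge(self, cost_usd: float) -> None:
        """Record one step; raise as soon as either limit is passed."""
        self.steps += 1
        self.spent_usd += cost_usd
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit {self.max_steps} exceeded")
        if self.spent_usd > self.max_cost_usd:
            raise BudgetExceeded(f"cost limit ${self.max_cost_usd:.2f} exceeded")
```

An agent loop would call `budget.charge(cost)` after each model or tool call and treat `BudgetExceeded` as a signal to stop and escalate rather than retry.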

Governance Framework

    ┌─────────────────────────────────────────────────────────────┐
    │                    AGENTIC AI GOVERNANCE                    │
    ├─────────────────────────────────────────────────────────────┤
    │                                                             │
    │  Layer 1: ACCESS CONTROL                                    │
    │    └─ Principle of Least Privilege                          │
    │    └─ Role-based agent permissions                          │
    │    └─ Time-limited access tokens                            │
    │                                                             │
    │  Layer 2: EXECUTION LIMITS                                  │
    │    └─ Token/cost budgets per agent                          │
    │    └─ Maximum execution time                                │
    │    └─ Rate limiting on external calls                       │
    │                                                             │
    │  Layer 3: OBSERVABILITY                                     │
    │    └─ Full audit trail of all actions                       │
    │    └─ Real-time monitoring dashboards                       │
    │    └─ Alerting on anomalous behavior                        │
    │                                                             │
    │  Layer 4: HUMAN OVERSIGHT                                   │
    │    └─ Approval workflows for high-risk actions              │
    │    └─ Escalation paths for edge cases                       │
    │    └─ Regular review of agent decisions                     │
    │                                                             │
    └─────────────────────────────────────────────────────────────┘
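
Layers 1 and 3 can be combined in a single chokepoint that every tool call passes through. The `ToolGateway` class below is a hypothetical sketch: it enforces an allow-list (least privilege) and records every attempt, permitted or not, in an in-memory audit log that a real system would ship to durable storage.

```python
import time
from typing import Any, Callable


class ToolGateway:
    """Only exposes allow-listed tools and records every call attempt."""

    def __init__(self, tools: dict[str, Callable[..., Any]], allowed: set[str]) -> None:
        self._tools = tools
        self._allowed = allowed
        self.audit_log: list[dict[str, Any]] = []

    def call(self, agent: str, tool: str, **kwargs: Any) -> Any:
        permitted = tool in self._allowed and tool in self._tools
        # Log before executing so denied and failing calls are still recorded.
        self.audit_log.append(
            {"ts": time.time(), "agent": agent, "tool": tool,
             "args": kwargs, "permitted": permitted}
        )
        if not permitted:
            raise PermissionError(f"{agent} may not call {tool}")
        return self._tools[tool](**kwargs)
```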

Building Your First Agent: A Practical Guide

Step 1: Identify the Right Use Case

Good candidates for agentic automation:

- Repetitive, multi-step workflows

- Clear success criteria

- Tolerable error rates

- Irreversible actions that can be gated by approvals

Bad candidates:

- Novel, creative tasks with no template

- High-stakes decisions with no rollback

- Processes requiring nuanced human judgment

Step 2: Design the Agent Architecture

    ┌─────────────────────────────────────────────────────────────┐
    │                    AGENT DESIGN TEMPLATE                    │
    ├─────────────────────────────────────────────────────────────┤
    │                                                             │
    │  IDENTITY                                                   │
    │    └─ Name: [e.g., "Lead Qualification Agent"]              │
    │    └─ Role: [e.g., "Evaluate and score incoming leads"]     │
    │                                                             │
    │  GOAL                                                       │
    │    └─ Primary: [e.g., "Qualify leads with 85% accuracy"]    │
    │    └─ Secondary: [e.g., "Reduce SDR time by 40%"]           │
    │                                                             │
    │  TOOLS                                                      │
    │    └─ [e.g., HubSpot API, LinkedIn Scraper, Email API]      │
    │                                                             │
    │  CONSTRAINTS                                                │
    │    └─ [e.g., "Never send email without approval"]           │
    │    └─ [e.g., "Max 100 API calls per hour"]                  │
    │                                                             │
    │  SUCCESS METRICS                                            │
    │    └─ [e.g., "Lead score accuracy vs. human baseline"]      │
    │                                                             │
    └─────────────────────────────────────────────────────────────┘
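
The template translates directly into a small data structure, which makes it possible to reject incomplete designs before any agent is built. The `AgentSpec` class and its `validate` rules are assumptions for illustration; the field values are the examples from the template.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    name: str
    role: str
    primary_goal: str
    tools: tuple[str, ...]
    constraints: tuple[str, ...] = ()
    success_metrics: tuple[str, ...] = ()
    secondary_goal: str = ""

    def validate(self) -> list[str]:
        """Return a list of problems; an empty list means the spec is usable."""
        problems = []
        if not self.tools:
            problems.append("no tools listed")
        if not self.constraints:
            problems.append("no constraints listed")
        if not self.success_metrics:
            problems.append("no success metrics listed")
        return problems


lead_agent = AgentSpec(
    name="Lead Qualification Agent",
    role="Evaluate and score incoming leads",
    primary_goal="Qualify leads with 85% accuracy",
    secondary_goal="Reduce SDR time by 40%",
    tools=("HubSpot API", "LinkedIn Scraper", "Email API"),
    constraints=("Never send email without approval", "Max 100 API calls per hour"),
    success_metrics=("Lead score accuracy vs. human baseline",),
)
```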

Step 3: Implement with n8n (Example)

    # n8n Agent Workflow (pseudo-code; real n8n workflows are stored as JSON)
    name: Lead Qualification Agent

    trigger:
      type: webhook
      source: HubSpot
      event: contact.creation

    nodes:
      # Pull the full contact record for the new lead
      - id: fetch_lead
        type: HubSpot
        action: get_contact

      # Look up the lead's company (illustrative URL, not a documented endpoint)
      - id: research_company
        type: HTTP Request
        url: "https://api.linkedin.com/company/{{lead.company}}"

      # Ask the model for a 0-100 score
      - id: score_lead
        type: AI Agent
        model: gpt-4o
        prompt: |
          Based on this lead data:
          - Company: {{lead.company}}
          - Role: {{lead.role}}
          - Company Info: {{research_company.summary}}

          Score this lead from 0-100 based on:
          - Company size fit
          - Role match
          - Budget signals

      # Route on the score; the first matching condition wins
      - id: route_lead
        type: Switch
        conditions:
          - score > 70: high_value_path
          - score > 40: medium_value_path
          - default: low_value_path
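
The routing step is easy to get subtly wrong, because the model returns free text rather than a number. Here is a hedged Python sketch of the same logic: `parse_score` and `route_lead` are hypothetical helpers, and the "first integer in the reply" parsing rule is an assumption that a stricter prompt or structured output would replace.

```python
import re


def parse_score(reply: str) -> int:
    """Extract the first 1-3 digit integer from the model's reply."""
    match = re.search(r"\b(\d{1,3})\b", reply)
    if match is None:
        raise ValueError("no score found in model reply")
    return int(match.group(1))


def route_lead(score: int) -> str:
    """Mirror the Switch node: the first matching condition wins."""
    if not 0 <= score <= 100:
        raise ValueError(f"score must be 0-100, got {score}")
    if score > 70:
        return "high_value_path"
    if score > 40:
        return "medium_value_path"
    return "low_value_path"
```

Note the boundaries: a score of exactly 70 lands on the medium path and exactly 40 on the low path, because both conditions use a strict `>`.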

Step 4: Monitor and Iterate

Essential observability stack:

- LangSmith (LangChain ecosystem)

- Helicone (OpenAI-focused)

- Datadog AI Observability

- Custom dashboards (Grafana + metrics)
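
Whichever tool you pick, iteration needs a number to track. One simple candidate, matching the "accuracy vs. human baseline" metric from the design template, is the share of leads where the agent's routing agrees with a human reviewer's. The function below is a minimal sketch and its name is an assumption.

```python
def agreement_rate(agent_labels: list[str], human_labels: list[str]) -> float:
    """Fraction of items where the agent's label matches the human's."""
    if len(agent_labels) != len(human_labels):
        raise ValueError("label lists must be the same length")
    if not agent_labels:
        raise ValueError("need at least one labeled item")
    matches = sum(a == h for a, h in zip(agent_labels, human_labels))
    return matches / len(agent_labels)
```

Computed weekly over a sample of human-reviewed leads, a falling agreement rate is an early sign that the prompt, the scoring criteria, or the incoming data has drifted.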

The Future: 2027 and Beyond

Emerging Trends

| Trend | Timeline | Impact |
|---|---|---|
| Cross-Org Agents | 2027 | Agents from different companies collaborate via MCP (Model Context Protocol) |
| Embodied Agents | 2027-2028 | Physical robots with agentic AI coordination |
| Personal Agent Networks | 2027 | Your email, calendar, shopping agents form a team |
| Autonomous Organizations | 2028+ | Companies with minimal human oversight |

The Task Horizon Expansion

Anthropic's research shows agents are becoming capable of longer autonomous operation:

| Year | Typical Task Horizon |
|---|---|
| 2024 | Minutes (single response) |
| 2025 | Hours (multi-step workflows) |
| 2026 | Days (project phases) |
| 2027 | Weeks (complete projects) |

Conclusion: Stop Chatting, Start Building

The shift from chatbots to agents is the most significant change in how humans work with computers since the invention of the GUI.

If you're still using ChatGPT just to "ask questions," you're living in 2024. The competitive advantage in 2026 belongs to those who allow AI to do work for them—safely, within defined boundaries, with appropriate oversight.

The technology is ready. The frameworks exist. The question is whether you'll be the one building agents, or the one being disrupted by them.

Ready to build your first agent? Check out our tutorials on n8n automation and multi-agent AI systems.

For enterprise guidance, see our AI governance frameworks and best AI agents of 2026.